AMD acquired Xilinx for AI data centre work, and Intel added AI acceleration to its Xeon data centre CPUs in 2019 it has also bought two startups, Nervana in 2016 for $408 million and Habana Labs in 2019 for $2 billion. Google started making its own chips in 2015 Amazon last year began shifting Alexa’s brains to its own Inferentia chips, after buying Annapurna Labs in 2016 Baidu has Kunlun, recently valued at $2 billion Qualcomm has its Cloud AI 100 and IBM is working on an energy-efficient design. While NVIDIA’s early work has given the GPU maker a head start, challengers are racing to catch up. We need more AI chips and we need better AI chips. More efficient hardware is necessary to chew through more parameters and more data for increased accuracy, but also to keep AI from becoming even more of an environmental disaster – Danish researchers calculated that the energy required to train GPT-3 could have the carbon footprint of driving 700,000km. It cost an estimated $4.6 million to compute, and that’s since been topped by a Google language model with 1.6 trillion parameters. OpenAI’s GPT-3, a deep learning system that can write paragraphs of sensible text, is the extreme example, made up of 175 billion parameters, the variables that make up models.

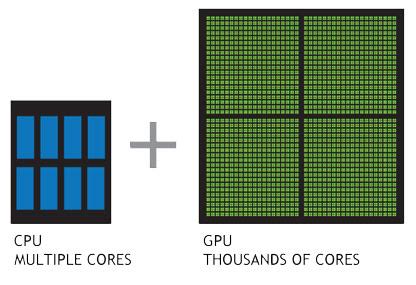

It’s the ground AI researchers stand upon. Ng’s YouTube cat finder, DeepMind’s board game champion AlphaGo, OpenAI’s language prediction model GPT-3 all run on NVIDIA hardware. Virtually all AI milestones have happened on NVIDIA hardware. Nearly 70 per cent of the top 500 supercomputers use its GPUs. It commands “nearly 100 per cent” of the market for training AI algorithms, says Karl Freund, analyst at Cambrian AI Research. In 2019, NVIDIA GPUs were deployed in 97.4 per cent of AI accelerator instances – hardware used to boost processing speeds – at the top four cloud providers: AWS, Google, Alibaba and Azure. Co-founded in 1993 by CEO Jensen Huang, NVIDIA’s major revenue stream is still graphics and gaming, but for the last financial year its sales of GPUs for use in data centres climbed to $6.7 billion. The when and how of it all seems unimportant now that NVIDIA dominates AI chips. “NVIDIA’s position in this market is not an accident,” he says. Indeed, he had been developing GPUs for AI while still a grad student at Berkeley, before joining NVIDIA in 2008. And he did – with just 12 GPUs – proving that the parallel processing offered by GPUs was faster and more efficient at training Ng’s cat-recognition model than CPUs.īut Catanzaro wants it known that NVIDIA didn’t begin its efforts with AI just because of that chance breakfast. GPUs (graphics processing units) are specialised for more intense workloads such as 3D rendering – and that makes them better than CPUs at powering AI.ĭally turned to Bryan Catanzaro, who now leads deep learning research at NVIDIA, to make it happen. “I said, ‘I bet we could do it with just a few GPUs,’” Dally says. The neural network was shown ten million YouTube videos and learned how to pick out human faces, bodies and cats – but to do so accurately, the system required thousands of CPUs (central processing units), the workhorse processors that power computers. 
Ng was working at the Google X lab on a project to build a neural network that could learn on its own. “He was trying to find cats on the internet – he didn’t put it that way, but that’s what he was doing,” Dally says. Back in 2010, Bill Dally, now chief scientist at NVIDIA, was having breakfast with a former colleague from Stanford University, the computer scientist Andrew Ng, who was working on a project with Google. THERE’S AN APOCRYPHAL story about how NVIDIA pivoted from games and graphics hardware to dominate AI chips – and it involves cats.
